12 Scientific Predictions Once Ridiculed That Later Proved Accurate

Science has a complicated relationship with new ideas. The same institution that celebrates breakthroughs has, time and again, laughed them out of the room first. Some of the most transformative discoveries in human history were initially dismissed as fantasy, pseudoscience, or the delusional ravings of people who simply didn’t know better. It’s almost poetic, really.

What’s even more remarkable is how consistent this pattern is. From ancient Greece to modern laboratories, bold thinkers have paid a price for being right too early. So here are twelve scientific predictions that were once mocked, scorned, or outright buried – only to eventually reshape the world.

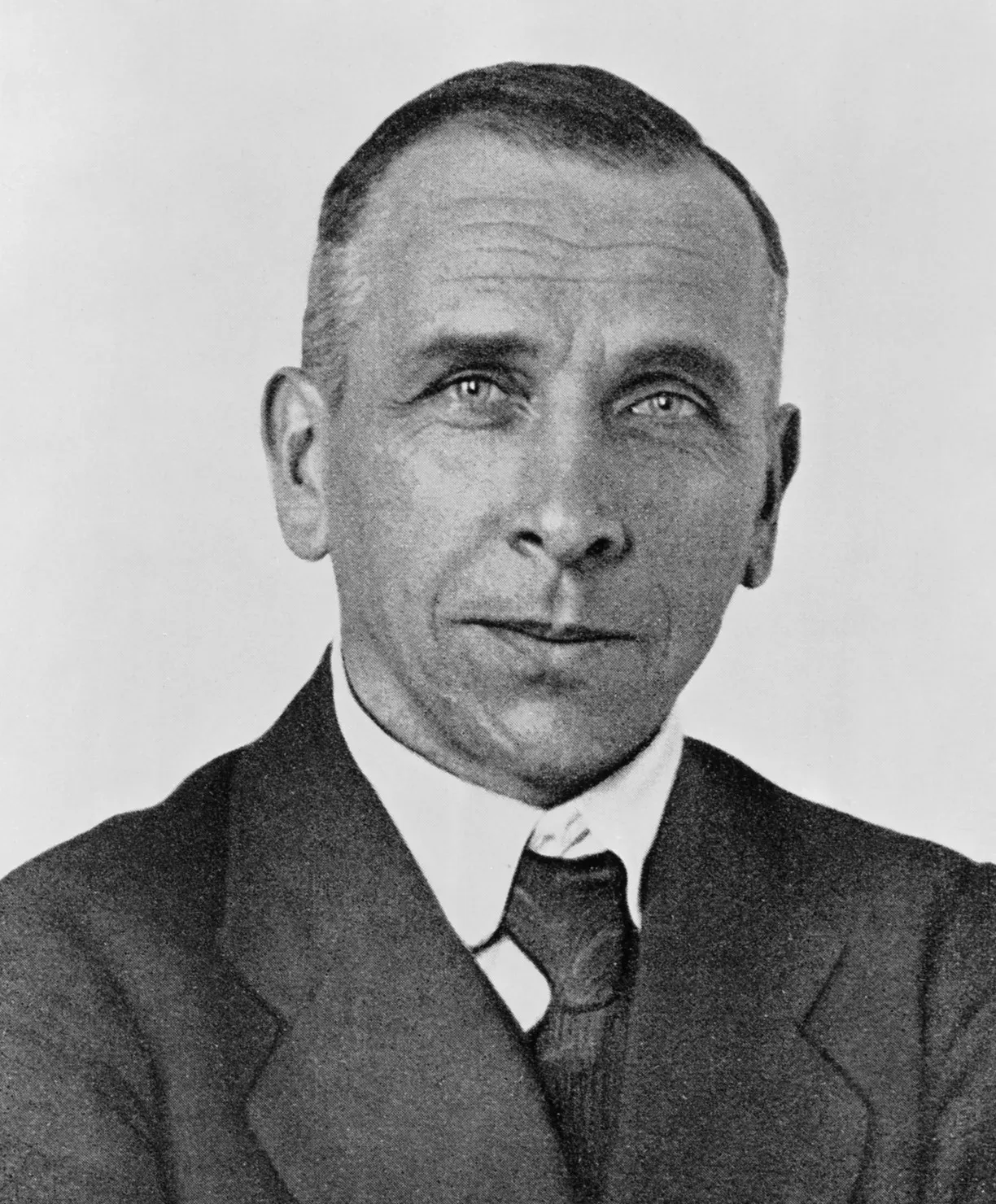

1. Alfred Wegener and the Drifting Continents

Imagine announcing to the world’s leading geologists that the ground beneath their feet has been slowly moving for hundreds of millions of years. They would look at you as if you had lost your mind. That’s exactly what happened to Alfred Wegener. On January 6, 1912, the 31-year-old German geophysicist and meteorologist proposed an extraordinary idea: Earth’s continents had once been a single landmass that had since drifted apart.

Lingering anti-German sentiment intensified the attacks, but German geologists piled on too, scorning what they called Wegener’s “delirious ravings” and other symptoms of “moving crust disease and wandering pole plague.” The British and Americans were equally merciless. Part of the problem was that Wegener had no convincing mechanism for how the continents might move; he thought the continents were moving through the earth’s crust like icebreakers plowing through ice sheets.

Continental drift was widely ridiculed and soon mostly forgotten. Wegener never lived to see his theory accepted – he died in 1930, at the age of 50, while on an expedition in Greenland. In the 1950s, evidence started to trickle in that made continental drift a more viable idea. By the 1960s, scientists had amassed enough evidence for the missing mechanism – namely, seafloor spreading – that Wegener’s hypothesis was absorbed into the accepted theory of plate tectonics.

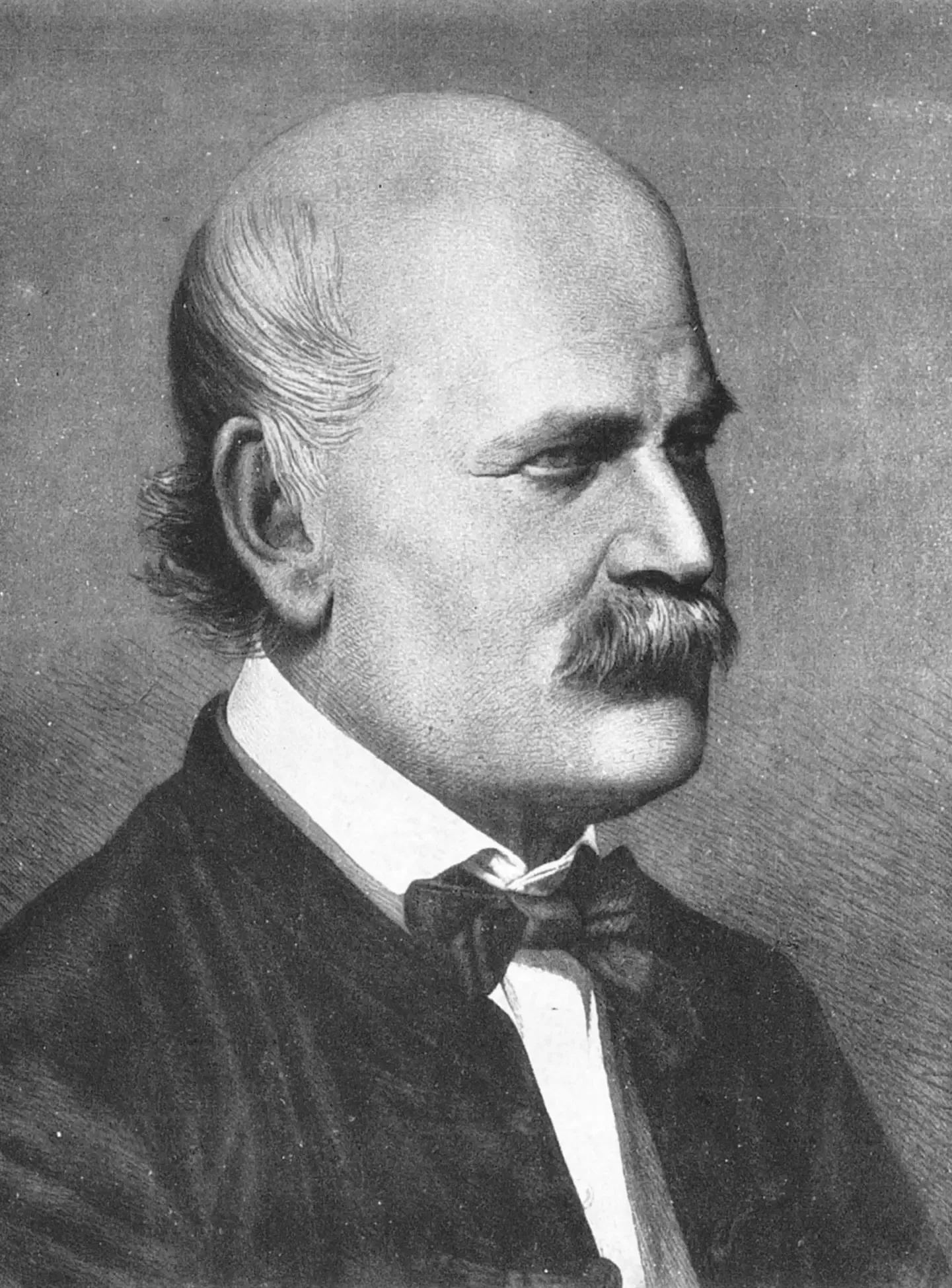

2. Ignaz Semmelweis and the Power of Handwashing

Here’s the thing – a man once proved that doctors were killing their own patients simply by not washing their hands. And they ignored him. In 1847, Ignaz Semmelweis identified the cause of childbed fever, an illness that claimed the lives of many new mothers. He realized that physicians were spreading infections and that handwashing could prevent the spread, making him a pioneer of antiseptic medical procedures.

Semmelweis immediately introduced a strict policy of handwashing, which included sterilizing the hands in a solution of chlorinated lime before initiating examinations of pregnant women. The results were dramatic: mortality rates plummeted from 18.27 percent in April to 0.19 percent by the end of the year. You’d think that would end the debate. It didn’t.

This significant discovery was not recognized in his lifetime; colleagues in the medical community refused to believe that they were causing patients to die through the transmission of infectious material. His findings anticipated the germ theory of disease, later developed by Louis Pasteur, Joseph Lister, and Robert Koch. Semmelweis died in an asylum in 1865, broken by rejection. His ideas, however, are now the foundation of modern infection control.

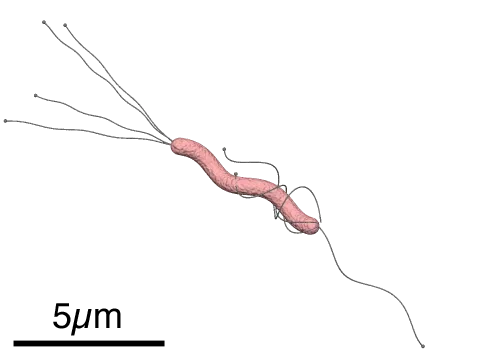

3. Barry Marshall and Stomach Ulcers Caused by Bacteria

For most of the twentieth century, doctors were absolutely certain that stomach ulcers were caused by stress, spicy food, and too much acid. Enter Barry Marshall and Robin Warren, who suggested something far more radical. The two Australian researchers showed that the bacterium Helicobacter pylori plays a major role in causing many peptic ulcers, challenging decades of medical doctrine.

At the time when Warren and Marshall announced their findings, it was a long-standing belief in medical teaching and practice that stress and lifestyle factors were the major causes of peptic ulcer disease. Warren and Marshall rebutted that dogma, and it was soon clear that H. pylori causes more than 90% of duodenal ulcers and up to 80% of gastric ulcers. Nobody believed them at first.

Hoping to persuade skeptics, Marshall drank a culture of H. pylori and within a week began suffering stomach pain and other symptoms of acute gastritis. Stomach biopsies confirmed that he had gastritis and showed that the affected areas of his stomach were infected with H. pylori. Marshall subsequently took antibiotics and was cured. In 2005, the Karolinska Institute in Stockholm awarded the Nobel Prize in Physiology or Medicine to Marshall and Robin Warren for their discovery.

4. Gregor Mendel and the Laws of Heredity

Gregor Mendel is probably the most quietly devastating case of scientific neglect in history. A monk doing experiments in a garden turned out to have unlocked the fundamental rules of inheritance – and nobody noticed for decades. He was the first person to correctly identify the rules of heredity that determine how traits are passed through generations of living things, and he is now regarded as the founder of genetics. The importance of his work was only properly appreciated in 1900, 16 years after his death and 34 years after he first published it.

Mendel’s work on genetic inheritance was barely read during his lifetime. This was certainly not due to lack of effort on his part. Although Mendel tried on many occasions to contact renowned scientists, they struggled to understand him and his theories. The irony stings even harder when you consider the often-repeated story that Charles Darwin had a copy of Mendel’s paper; had he read it, the connection between evolution by natural selection and classical genetics might have been made much earlier.

Today, Mendel’s laws of segregation and independent assortment are taught in every biology classroom on earth. His pea plants, tended quietly in a monastery garden, became the bedrock of an entire scientific discipline. Sometimes the universe has a brutal sense of irony.

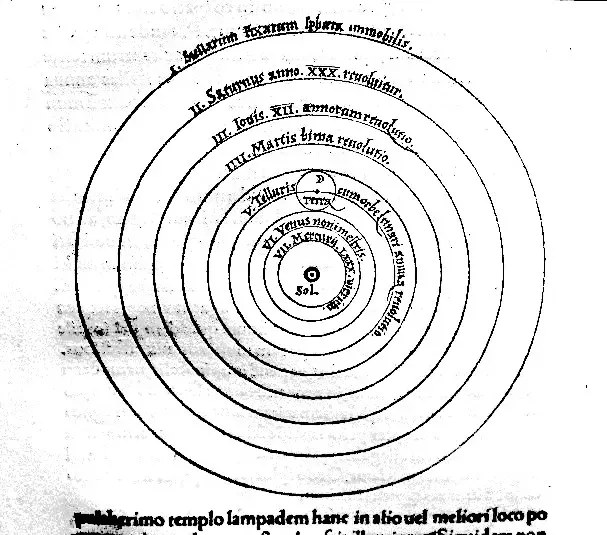

5. Nicolaus Copernicus and the Sun at the Center

Before the sixteenth century, the prevailing model placed Earth at the absolute center of everything. Anyone who dared suggest otherwise was risking more than just professional humiliation. Although the theory of heliocentrism had been variously proposed throughout ancient history, the concept reappeared with a bang when Renaissance astronomer Nicolaus Copernicus put it forward in the first half of the 16th century. It was met with scholarly interest, but it wasn’t long before religious leaders such as Martin Luther and the Catholic Sacred Congregation began criticising the work.

Some 1,800 years after Aristarchus, Copernicus began the scientific revolution when he resurrected the idea that the earth and other planets orbit the sun. He published his work shortly before he died. Most astronomers who read Copernicus’s book agreed that it was excellent, but they did not believe it – most continued to believe that the earth is the center of the universe.

Galileo later took up the heliocentric idea. It had been sidelined by religious leaders, but Galileo brought it back into the light in the early 1600s. Being put under house arrest didn’t stop Galileo from saying that the Earth and other planets orbited around the sun. That’s commitment to a hypothesis. Honestly, it’s remarkable.

6. Robert Goddard and the Liquid-Fueled Rocket

When Robert Goddard published his ideas about rockets that could travel through the vacuum of space, the New York Times editorial board publicly mocked him. The piece was dismissive, suggesting he didn’t understand basic physics. One of history’s more embarrassing editorial decisions, in retrospect. Although he experienced failure after failure, he kept at it, and on March 16, 1926, he achieved the first successful flight with a liquid-propellant rocket. That historic flight achieved an altitude of 41 feet and only lasted 2 seconds – but he proved it could be done.

Brief as it was, that flight was enough to show that he’d been correct in his assertions. He and his team went on to launch 34 rockets between 1926 and 1941, some of which went as high as 1.6 miles. Still, the skeptics held firm – until they really couldn’t anymore.

On July 17, 1969 – three days before the first humans landed on the Moon – the New York Times retracted its editorial. The correction read: “Further investigation and experimentation have confirmed the findings of Isaac Newton in the 17th Century and it is now definitely established that a rocket can function in a vacuum as well as in an atmosphere.” Better late than never, one supposes.

7. Katalin Karikó and the mRNA Technology Behind COVID Vaccines

Few stories in recent scientific history are as striking as that of Katalin Karikó, the Hungarian-American biochemist who spent decades being dismissed, defunded, and demoted for believing mRNA could be used as medicine. She emigrated from Hungary to the US and faced many setbacks throughout her scientific career – rejected papers, denied grants – but she always persevered, eventually achieving global recognition.

Despite decades of doubt and dismissal, Karikó never gave up on the research that gave us mRNA COVID vaccines in record time. The mRNA platform she spent her career developing became the fastest vaccine technology ever deployed in response to a global pandemic. The scientific community that ignored her for years suddenly found itself relying on her work to survive one.

Karikó received the Nobel Prize for “discoveries concerning nucleoside base modifications that enabled the development of effective mRNA vaccines against COVID-19.” She and Drew Weissman shared the 2023 Nobel Prize in Physiology or Medicine. Let’s be real – there is a certain poetic justice in watching a career spent in obscurity end in Stockholm.

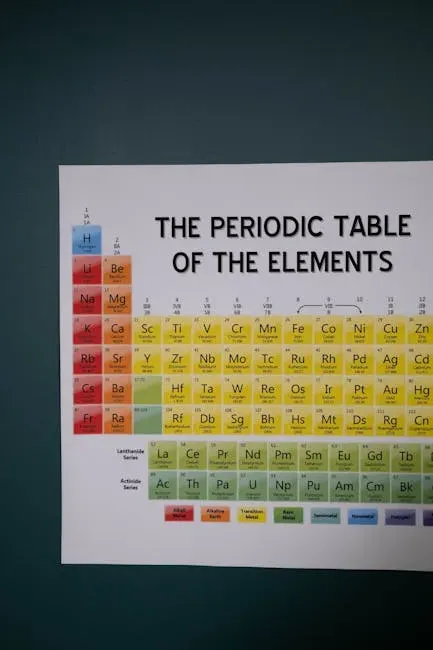

8. Dmitri Mendeleev and His Gaps in the Periodic Table

In 1869, Dmitri Mendeleev published the first version of his periodic table of elements. It was bold enough on its own. Yet what made it truly audacious was what he did with the blank spaces. Rather than treating the gaps in his table as errors, he confidently predicted that undiscovered elements would fill them. His accurate forecasts of the properties of gallium and germanium later validated the periodic law and guided chemical discovery.

Most scientists at the time viewed those gaps as weaknesses in his model. They suggested his entire system might be wrong. Mendeleev insisted the elements simply hadn’t been discovered yet, and that when they were, they would fit his predicted properties almost exactly. That’s a remarkable leap of confidence.

When gallium was discovered in 1875 and germanium in 1886, their properties matched Mendeleev’s predictions with extraordinary precision. The match silenced the critics almost immediately. The periodic table is now the single most important organizational tool in all of chemistry, and those once-mocked empty spaces are what proved it correct.

9. Edward Jenner and the Smallpox Vaccine

Edward Jenner noticed something curious in the late eighteenth century: milkmaids who contracted cowpox seemed protected from the far more deadly smallpox. He thought the connection was worth testing. Most of the medical establishment thought he was chasing a ridiculous folk belief. He tried his idea out in 1796 on eight-year-old James Phipps, infecting a cut with a small amount of cowpox pus. When the boy later proved immune to smallpox, Jenner had his first successful case study.

However, certain elements of society viewed the vaccine with distrust, and various criticisms and satirical pieces sprang up. The most notable was the cartoon ‘The Cow Pock,’ in which the artist portrays people sprouting cows from their bodies after being given Jenner’s vaccine. The ridicule was intense, visceral, and very public. The fear that cows might literally grow from people’s arms apparently resonated.

Jenner’s work, however, survived the mockery. Vaccination went on to become one of the most powerful tools in public health history. Smallpox, a disease that once killed millions of people per decade, was officially declared eradicated by the World Health Organization in 1980. Jenner’s laughed-at cowpox experiment made that possible.

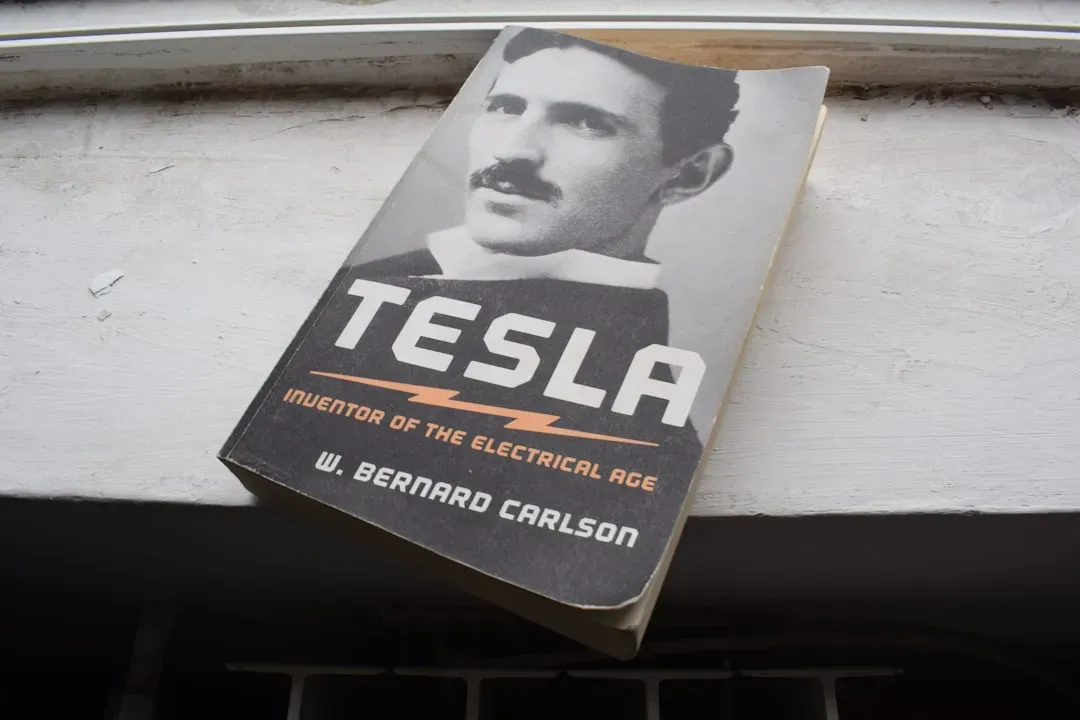

10. Nikola Tesla and Global Wireless Communication

Nikola Tesla envisioned a world where information could be transmitted across the globe without wires or cables, through the air itself. In the early 1900s, that sounded less like science and more like something out of a fever dream. His vision of personal wireless devices connecting the world seemed impossibly futuristic. He described instant news access and interconnected global markets decades before the technology existed. Modern mobile networks and the internet closely echo those predictions.

Tesla’s contemporaries, including many well-funded and well-connected rivals, dismissed his grand wireless transmission ideas as impractical. His famous Wardenclyffe Tower project was abandoned, and he died in 1943 largely broke and alone. J.P. Morgan, who had put up the initial money for Wardenclyffe, declined to fund it further, and other potential backers chose safer bets.

Walk around any city today and nearly every person is carrying a wireless device connected to a global communications network. That is, more or less, what Tesla described. The specific technical pathway differed, but the core prediction – universal wireless connectivity – proved not just accurate, but foundational to modern civilization.

11. The Ancient Atomists: Leucippus and Democritus

Around 400 BC, two Greek philosophers, Leucippus and Democritus, proposed something extraordinary: that all matter in the universe was made of tiny, indivisible particles. No instruments. No experiments. Just pure philosophical reasoning. More than two thousand years later, science proved the core of the idea right. Not bad for a couple of guys with no microscopes and a lot of imagination.

For most of those two thousand years, the dominant model – championed by Aristotle – described matter as composed of four fundamental elements: earth, water, fire, and air. Atomism was essentially dormant. The idea resurfaced meaningfully only when John Dalton proposed his atomic theory in 1808, backed by actual chemical evidence.

Today, atomic and subatomic physics underpin everything from medical imaging to nuclear energy to semiconductor technology. The modern smartphone in your pocket operates thanks to quantum mechanics, which is itself built on understanding matter at the atomic and sub-atomic scale. Democritus didn’t get the Nobel Prize, but the direction of his thinking proved more accurate than the consensus held for roughly two millennia.

12. Einstein’s General Relativity and the Prediction of Gravitational Waves

In 1916, Albert Einstein predicted – as a consequence of his general theory of relativity – that massive accelerating objects would create ripples in the fabric of spacetime itself. These ripples are known as gravitational waves. Even Einstein doubted they would ever be detected, given how unimaginably small the effect would be.

For nearly a century, gravitational waves remained a theoretical prediction with no direct observational evidence. Many physicists considered them real but practically undetectable, given the sensitivity of instruments required to measure them. The prediction sat in a kind of scientific limbo – accepted in theory, unproven in practice.

Then, in September 2015, the LIGO collaboration detected gravitational waves for the first time, produced by two colliding black holes more than a billion light-years away. The signal was real, and it matched Einstein’s predictions with stunning precision. Gravitational waves show that a discovery can begin as pure theory, with its empirical confirmation arriving much later. The detection earned the Nobel Prize in Physics in 2017 – confirming a prediction that had waited roughly a century to be proven right.

—

Science, at its best, is not about consensus. It is about evidence, persistence, and the willingness to be wrong – or to stand firm when everyone says you are. The twelve stories above share a quiet lesson: ridicule is not refutation. Being laughed at, dismissed, or professionally destroyed has never once made a true thing false. What does that tell us about the ideas being dismissed in laboratories and journals right now? That’s the question worth sitting with.